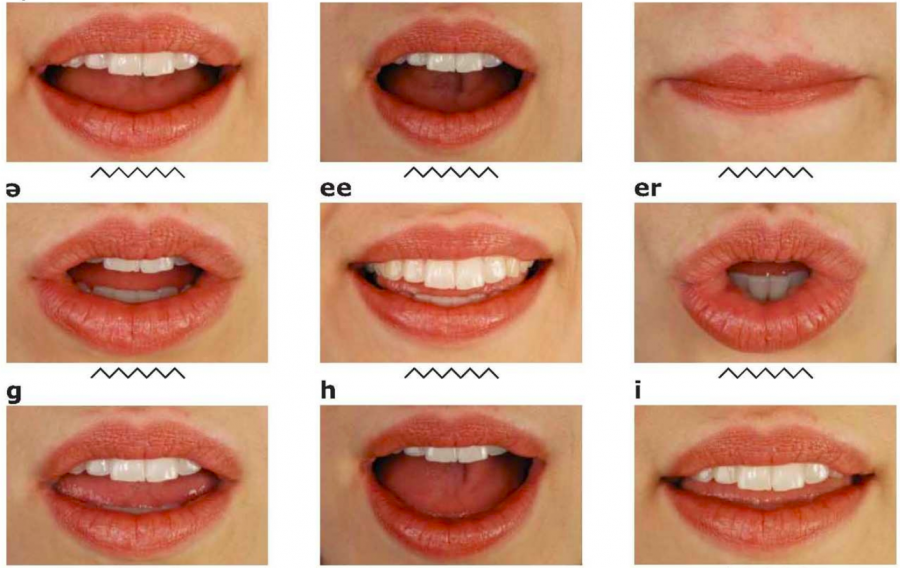

The main technique used during face-to-face communication is speech, but this involves a lot more than just listening to the words that people say. A lot of studies have revealed that it is much harder to understand what someone is trying to say if you can't see how their mouth is moving. Reading someone's lips helps you parse the meaning of their words in situations where you can't hear them all that clearly, and that is something Meta seems to be taking into account with its AI.

Previously, voice and speech recognition software has operated on an audio-only basis. Meta is trying to see whether anything can be gained by allowing AI to read lips as well as listen to audio recordings. It has developed a new framework called AV-HuBERT that takes both into account, since this could vastly improve speech recognition, although it should be said that this is only a test at this point. Monitoring the movement of lips adds another form of input that may boost an AI's ability to understand people and contextualize their words, letting it perform tasks more efficiently once it has been fully trained. The results that have come in for AV-HuBERT so far seem to be rather positive.

Assael, Brendan Shillingford, Shimon Whiteson, Nando de Freitas

Abstract: Lipreading is the task of decoding text from the movement of a speaker's mouth. Traditional approaches separated the problem into two stages: designing or learning visual features, and prediction. More recent deep lipreading approaches are end-to-end trainable (Wand et al., 2016; Chung & Zisserman, 2016a). However, existing work on models trained end-to-end perform only word classification, rather than sentence-level sequence prediction. Studies have shown that human lipreading performance increases for longer words (Easton & Basala, 1982), indicating the importance of features capturing temporal context. We present LipNet, a model that maps a variable-length sequence of video frames to text, making use of spatiotemporal convolutions, a recurrent network, and the connectionist temporal classification loss, trained entirely end-to-end. To the best of our knowledge, LipNet is the first end-to-end sentence-level lipreading model that simultaneously learns spatiotemporal visual features and a sequence model. On the GRID corpus, LipNet achieves 95.2% accuracy in sentence-level, overlapped speaker split task, outperforming experienced human lipreaders and the previous 86.4% word-level state-of-the-art accuracy (Gergen et al., 2016).
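To make the "connectionist temporal classification" part a little more concrete: a model trained with a CTC loss scores every character, plus a special blank symbol, for each video frame, and those per-frame scores still have to be turned into text. The simplest way to do that is greedy decoding: take the best symbol for each frame, merge consecutive repeats, then drop the blanks. The snippet below is only a rough sketch of that idea in plain Python and is not LipNet's actual decoder. The function name, the `_` blank symbol, and the toy scores are all made up for illustration.

```python
from itertools import groupby

BLANK = "_"  # assumed blank symbol, chosen only for this example


def ctc_greedy_decode(frame_scores, alphabet, blank=BLANK):
    """Greedy CTC decoding over per-frame scores.

    frame_scores: one list of scores per video frame, with one score
                  per symbol in `alphabet` (same order).
    alphabet:     list of symbols, including the blank.
    """
    # 1. Pick the highest-scoring symbol for every frame.
    best_path = [
        alphabet[max(range(len(scores)), key=scores.__getitem__)]
        for scores in frame_scores
    ]
    # 2. Merge runs of the same symbol into a single symbol.
    collapsed = [symbol for symbol, _ in groupby(best_path)]
    # 3. Remove blanks, which only separate genuine repeated letters.
    return "".join(symbol for symbol in collapsed if symbol != blank)


if __name__ == "__main__":
    alphabet = [BLANK, "a", "b"]
    # Toy scores for six frames; the best path is: a a _ a b b
    scores = [
        [0.1, 0.8, 0.1],
        [0.2, 0.7, 0.1],
        [0.9, 0.05, 0.05],
        [0.1, 0.6, 0.3],
        [0.1, 0.2, 0.7],
        [0.2, 0.1, 0.7],
    ]
    print(ctc_greedy_decode(scores, alphabet))  # prints "aab"
```

The blank is what lets six frames become a three-letter string with a doubled letter: the two "a" frames on either side of the blank stay separate, while the back-to-back "b" frames merge into one.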
